The Technology Behind Herb Hub 365

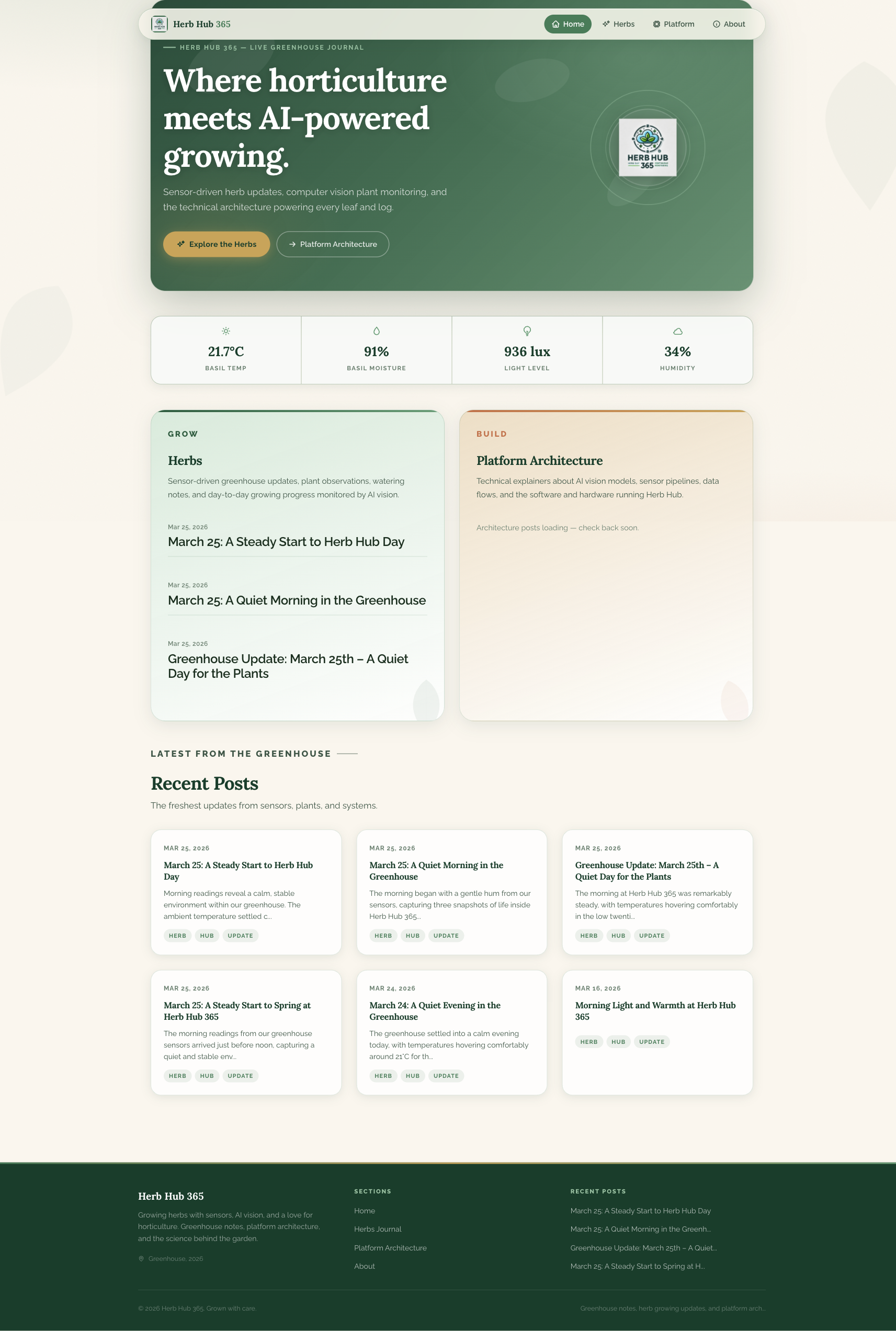

Herb Hub 365 has grown from a simple greenhouse monitor into a small but fairly involved software platform. This post walks through everything that goes into running it: the languages, services, tools, and external integrations that keep the greenhouse observed, narrated, and published each day.

Stack at a Glance

Languages & Runtimes · Frontend & Content · Infrastructure & Messaging · AI & Media · Cloud & IaC

Languages and Runtimes

The platform is built across four languages. Go forms the backbone of all eight microservices, running on version 1.23.4 for most services and 1.25.0 for the watering controller. Go was chosen primarily for its straightforward concurrency model, fast startup time in containers, and the ability to ship self-contained static binaries with no runtime dependencies.

The public-facing site is generated by Jekyll, running on Ruby 3.3. A small Python 3 script handles low-level GPIO relay control for the physical watering hardware, using the gpiod library to communicate directly with the Raspberry Pi’s GPIO pins. Bash scripts tie together scheduled tasks, queue setup, and sensor data collection at the edges of the system.

The Eight Microservices

Each service has a single, clearly bounded responsibility.

timelapse-builder: Image → MP4 · ffmpeg · stdlib only

video-narrator: MuseTalk · Kokoro TTS · NVENC/x264

video-publisher: YouTube Data API v3 · OAuth 2.0

llm-service: Ollama · Gemma 4 · stdlib only

blog-poster: Prometheus · LLM · Git · RabbitMQ

tts-narrator: Kokoro TTS · MP3 · cron

herbhub-manager: Orchestration · RabbitMQ · stdlib only

watering: GPIO · Prometheus · RabbitMQ · Go 1.25

timelapse-builder scans a directory of timestamped images, applies optional date-range and brightness filters, and calls ffmpeg to produce an MP4. It exposes a REST API on port 8082 and allows only one build to run at a time. The service has no external Go dependencies and uses only the standard library.
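
One way to get the one-build-at-a-time behaviour with only the standard library is a mutex that is tried rather than waited on. The sketch below is an illustration under that assumption, not the service's actual code; the `builder` type, the `errBusy` error, and the choice of a 409 response are all hypothetical.

```go
package main

import (
	"errors"
	"fmt"
	"net/http"
	"sync"
)

// errBusy is a hypothetical error returned when a build is already running.
var errBusy = errors.New("a build is already running")

// builder guards the build step so that only one can run at a time.
type builder struct {
	mu sync.Mutex
}

// run executes build if no other build holds the lock, and returns errBusy
// immediately otherwise. TryLock (Go 1.18+) never blocks.
func (b *builder) run(build func() error) error {
	if !b.mu.TryLock() {
		return errBusy
	}
	defer b.mu.Unlock()
	return build()
}

// handleBuild shows how the gate could map onto HTTP status codes.
// The empty build function stands in for the real ffmpeg invocation.
func (b *builder) handleBuild(w http.ResponseWriter, r *http.Request) {
	err := b.run(func() error { return nil })
	switch {
	case errors.Is(err, errBusy):
		http.Error(w, err.Error(), http.StatusConflict)
	case err != nil:
		http.Error(w, err.Error(), http.StatusInternalServerError)
	default:
		w.WriteHeader(http.StatusOK)
	}
}

func main() {
	b := &builder{}
	// Simulate an overlapping request by starting a second build
	// while the first one still holds the lock.
	err := b.run(func() error {
		return b.run(func() error { return nil })
	})
	fmt.Println(err) // a build is already running
}
```

Rejecting the second request instead of queueing it keeps the service stateless between calls; a caller that needs the build can simply retry later.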

video-narrator takes a script and produces a talking-head video by coordinating MuseTalk for avatar lip-sync and Kokoro TTS for voice synthesis. On machines with a compatible NVIDIA GPU it uses ffmpeg’s h264_nvenc encoder for significantly faster output; on CPU-only machines it falls back to libx264.
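
The encoder fallback could be sketched as follows. This assumes the choice is made by inspecting the text printed by `ffmpeg -hide_banner -encoders`, which may not be how video-narrator actually decides; the function names are hypothetical. Note that the listing only shows that the ffmpeg build includes an encoder, not that a usable GPU is present at runtime.

```go
package main

import (
	"fmt"
	"strings"
)

// hasEncoder reports whether name appears as an encoder in the text printed
// by `ffmpeg -hide_banner -encoders`. Each encoder row looks like
// " V....D h264_nvenc  description", so the name is the second field.
func hasEncoder(encoderList, name string) bool {
	for _, line := range strings.Split(encoderList, "\n") {
		fields := strings.Fields(line)
		if len(fields) >= 2 && fields[1] == name {
			return true
		}
	}
	return false
}

// videoCodec picks the NVENC encoder when it is listed and libx264 otherwise.
func videoCodec(encoderList string) string {
	if hasEncoder(encoderList, "h264_nvenc") {
		return "h264_nvenc"
	}
	return "libx264"
}

func main() {
	// Abbreviated sample rows; real input would come from running ffmpeg.
	gpuBuild := " V....D libx264              libx264 H.264\n" +
		" V....D h264_nvenc           NVIDIA NVENC H.264 encoder"
	cpuBuild := " V....D libx264              libx264 H.264"

	fmt.Println(videoCodec(gpuBuild)) // h264_nvenc
	fmt.Println(videoCodec(cpuBuild)) // libx264
}
```

The returned name would be passed to ffmpeg as the value of `-c:v`.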

video-publisher uploads finished videos to YouTube. It authenticates with OAuth 2.0 and calls the YouTube Data API v3 through Google’s official Go client, setting the title, description, tags, and privacy status on each upload.

llm-service is a thin HTTP wrapper around Ollama, the local LLM inference server. It abstracts model selection and request formatting so that other services can generate text without caring which model is running underneath. The default model is Gemma 4.
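
As a rough illustration of what hiding model selection can look like, the sketch below builds the JSON body for Ollama's `/api/generate` endpoint and falls back to a default model when the caller names none. The `gemma4` tag, the type name, and the helper are assumptions for the example; the real service may shape its requests differently.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// defaultModel is a hypothetical Ollama model tag standing in for the
// platform's default; the actual tag is not given in this post.
const defaultModel = "gemma4"

// generateRequest holds the fields of an Ollama /api/generate request
// that this sketch uses.
type generateRequest struct {
	Model  string `json:"model"`
	Prompt string `json:"prompt"`
	Stream bool   `json:"stream"`
}

// newGenerateRequest fills in the default model so callers only need to
// supply a prompt. Streaming is disabled to get a single JSON response.
func newGenerateRequest(model, prompt string) generateRequest {
	if model == "" {
		model = defaultModel
	}
	return generateRequest{Model: model, Prompt: prompt, Stream: false}
}

func main() {
	body, err := json.Marshal(newGenerateRequest("", "Summarise the greenhouse readings."))
	if err != nil {
		panic(err)
	}
	// This body would be POSTed to the Ollama server, which listens on
	// port 11434 by default.
	fmt.Println(string(body))
}
```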

blog-poster pulls together the most pieces. It queries Prometheus for recent sensor metrics, requests a draft from llm-service, pulls a matching timelapse image, assembles the final Markdown post, and commits it directly to the Jekyll repository so that the site redeploys automatically.
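
The assembly step might look something like the sketch below, which relies on Jekyll's convention that posts live at `_posts/YYYY-MM-DD-title.md` and start with YAML front matter. The helper names, the front matter fields, and the image alt text are illustrative assumptions rather than the service's real layout.

```go
package main

import (
	"fmt"
	"strings"
)

// slugify lowercases the title and collapses every run of characters
// outside a-z and 0-9 into a single hyphen.
func slugify(title string) string {
	var b strings.Builder
	lastHyphen := true // suppresses a leading hyphen
	for _, r := range strings.ToLower(title) {
		switch {
		case (r >= 'a' && r <= 'z') || (r >= '0' && r <= '9'):
			b.WriteRune(r)
			lastHyphen = false
		case !lastHyphen:
			b.WriteByte('-')
			lastHyphen = true
		}
	}
	return strings.TrimSuffix(b.String(), "-")
}

// postPath follows Jekyll's _posts/YYYY-MM-DD-title.md naming convention.
// date is expected in YYYY-MM-DD form.
func postPath(date, title string) string {
	return fmt.Sprintf("_posts/%s-%s.md", date, slugify(title))
}

// renderPost joins front matter, a timelapse image, and the drafted body.
// %q yields a double-quoted string, which is valid YAML for plain ASCII titles.
func renderPost(title, date, image, body string) string {
	var b strings.Builder
	b.WriteString("---\nlayout: post\n")
	fmt.Fprintf(&b, "title: %q\n", title)
	fmt.Fprintf(&b, "date: %s\n", date)
	b.WriteString("---\n\n")
	fmt.Fprintf(&b, "![Timelapse frame](%s)\n\n", image)
	b.WriteString(body + "\n")
	return b.String()
}

func main() {
	title := "Basil Update: Day 12!"
	fmt.Println(postPath("2025-03-04", title)) // _posts/2025-03-04-basil-update-day-12.md
	fmt.Print(renderPost(title, "2025-03-04", "/assets/frame.jpg", "Draft text from llm-service."))
}
```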

tts-narrator converts written blog posts into audio narrations by sending requests to the Kokoro TTS API, producing MP3 files that are surfaced via the site’s audio player.

herbhub-manager acts as the central coordinator, watching for new video outputs over RabbitMQ and triggering generation of the associated blog post. It exposes a small HTTP API on port 8080.

watering monitors soil moisture sensors via Prometheus, publishes telemetry to RabbitMQ, and triggers GPIO relay pulses through the Python relay wrapper when plants need watering.
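
The decision at the heart of this loop can be sketched in a few lines: read the latest sample from a Prometheus instant-query response and compare it with a threshold. The metric name, the 30 percent threshold, and the function names below are hypothetical; the response shape follows the Prometheus HTTP API's `/api/v1/query` format.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
	"strconv"
)

// queryResponse mirrors the parts of a Prometheus instant-query response
// that this sketch needs. Each sample is [unix timestamp, "value as string"].
type queryResponse struct {
	Status string `json:"status"`
	Data   struct {
		Result []struct {
			Value [2]any `json:"value"`
		} `json:"result"`
	} `json:"data"`
}

// latestValue extracts the first sample value from an instant-query response.
func latestValue(raw []byte) (float64, error) {
	var resp queryResponse
	if err := json.Unmarshal(raw, &resp); err != nil {
		return 0, err
	}
	if resp.Status != "success" || len(resp.Data.Result) == 0 {
		return 0, errors.New("no sample in response")
	}
	s, ok := resp.Data.Result[0].Value[1].(string)
	if !ok {
		return 0, errors.New("sample value is not a string")
	}
	return strconv.ParseFloat(s, 64)
}

// needsWater reports whether the reading has dropped below the threshold.
func needsWater(moisture, threshold float64) bool {
	return moisture < threshold
}

func main() {
	// Hand-written sample response with a hypothetical metric name.
	raw := []byte(`{"status":"success","data":{"resultType":"vector","result":[
		{"metric":{"__name__":"soil_moisture_percent"},"value":[1700000000,"27.5"]}]}}`)

	v, err := latestValue(raw)
	if err != nil {
		panic(err)
	}
	fmt.Printf("moisture=%.1f water=%t\n", v, needsWater(v, 30)) // moisture=27.5 water=true
}
```

A real controller would likely add hysteresis or a minimum interval between pulses so that a noisy sensor does not trigger repeated watering.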

Messaging and Scheduling

Services communicate asynchronously through RabbitMQ, running the 3-management image so the full management UI is always available. The primary queues are sensor.snapshots, which carries telemetry data from the greenhouse, and video.produced, which signals that a new video is ready for downstream processing. A dead-letter queue sits alongside the main video queue to catch failed deliveries.
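
In RabbitMQ, a dead-letter setup is configured through arguments on the main queue. The sketch below builds those arguments for the video queue; `x-dead-letter-exchange` and `x-dead-letter-routing-key` are standard RabbitMQ queue arguments, while the exchange and dead-letter queue names are made up for the example.

```go
package main

import "fmt"

const (
	videoQueue         = "video.produced"
	deadLetterExchange = "video.dlx"           // hypothetical exchange name
	deadLetterQueue    = "video.produced.dead" // hypothetical queue name
)

// deadLetterArgs returns queue arguments telling RabbitMQ where to route
// messages that are rejected without requeue or that expire.
func deadLetterArgs(exchange, routingKey string) map[string]any {
	return map[string]any{
		"x-dead-letter-exchange":    exchange,
		"x-dead-letter-routing-key": routingKey,
	}
}

func main() {
	args := deadLetterArgs(deadLetterExchange, deadLetterQueue)
	fmt.Printf("declare %q with arguments %v\n", videoQueue, args)
}
```

With a Go client such as amqp091-go, a table like this is typically passed as the final argument when declaring the main queue, and the dead-letter queue is bound to the named exchange separately.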

Scheduled work is handled by Cronicle (v1.14.2), a self-hosted web-based job scheduler. It drives the recurring sensor snapshots, timelapse builds, and nightly post generation without requiring cron entries spread across multiple machines.

Infrastructure and Networking

All services run as Docker containers, defined in a single Compose file with an optional GPU variant for the video narrator. Traefik sits in front of everything as the reverse proxy, handling HTTPS termination with certificates provisioned automatically via Let’s Encrypt. Each internal service is reachable through a subdomain — for example, timelapse.herbhub365.com routes directly to the timelapse builder’s API.

Container and system metrics are collected by cAdvisor and scraped by a Prometheus instance running in agent mode, which forwards all data via remote write to a Prometheus instance at the home lab. A Homepage dashboard gives a single-pane view of service health.

The Public Site

The site itself is a Jekyll 4.3.4 static site using the Minima theme with a heavily customised layout and CSS. It is deployed to Azure Static Web Apps via a GitHub Actions workflow that triggers on every push to main. Build and deployment together typically complete in under two minutes. Content Security Policy headers are configured in staticwebapp.config.json and applied at the CDN edge.

External Services and APIs

Beyond the self-hosted stack, the platform touches a small number of external services. YouTube receives finished videos through the Data API. Kokoro TTS and MuseTalk are hosted on the home lab network rather than the public internet. Azure hosts the static site and the Terraform state backend. Prometheus remote write carries metrics off-device for longer-term retention.

Secrets — YouTube OAuth tokens, Git personal access tokens, RabbitMQ credentials, and SSH keys for Cronicle — are injected via environment variables at container start rather than being baked into images.
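
On the service side, reading secrets from the environment pairs well with a startup check that fails fast when something is missing. The following is a minimal sketch of that idea; the variable names and the `loadSecrets` helper are hypothetical and not taken from the platform's code.

```go
package main

import (
	"fmt"
	"os"
	"strings"
)

// loadSecrets reads each key through lookup and reports every missing or
// empty variable in a single error. Taking lookup as a parameter keeps the
// function testable without touching the real environment.
func loadSecrets(lookup func(string) (string, bool), keys ...string) (map[string]string, error) {
	secrets := make(map[string]string, len(keys))
	var missing []string
	for _, k := range keys {
		v, ok := lookup(k)
		if !ok || v == "" {
			missing = append(missing, k)
			continue
		}
		secrets[k] = v
	}
	if len(missing) > 0 {
		return nil, fmt.Errorf("missing required environment variables: %s",
			strings.Join(missing, ", "))
	}
	return secrets, nil
}

func main() {
	// Hypothetical variable names, used only for illustration.
	_, err := loadSecrets(os.LookupEnv, "RABBITMQ_URL", "GIT_TOKEN")
	if err != nil {
		fmt.Println("startup check:", err)
		return
	}
	fmt.Println("all secrets present")
}
```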

Media Processing

ffmpeg does the heavy lifting for all video work: compositing timelapse sequences, concatenating narrator segments with intro and outro clips, and controlling output quality through CRF settings. ImageMagick is used selectively for brightness analysis during timelapse frame filtering, though the plan is to eventually replace that with ffmpeg’s native blackframe filter to avoid spawning a separate process per image.
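
For the timelapse case, the ffmpeg invocation can be sketched as an argument list built from a frame pattern, a frame rate, and a CRF value. The flags are standard ffmpeg options, but the specific values and the `timelapseArgs` helper are assumptions; the platform's real command line is not shown in this post.

```go
package main

import (
	"fmt"
	"strconv"
)

// timelapseArgs builds ffmpeg arguments that turn a glob of still images
// into an H.264 MP4. Lower CRF means higher quality and larger files;
// libx264 accepts 0-51 for 8-bit output.
func timelapseArgs(pattern, out string, fps, crf int) ([]string, error) {
	if fps <= 0 {
		return nil, fmt.Errorf("fps must be positive, got %d", fps)
	}
	if crf < 0 || crf > 51 {
		return nil, fmt.Errorf("crf %d is outside the 0-51 range", crf)
	}
	return []string{
		"-y", // overwrite the output file if it exists
		"-framerate", strconv.Itoa(fps),
		"-pattern_type", "glob",
		"-i", pattern,
		"-c:v", "libx264",
		"-crf", strconv.Itoa(crf),
		"-pix_fmt", "yuv420p", // widest player compatibility
		out,
	}, nil
}

func main() {
	args, err := timelapseArgs("frames/*.jpg", "timelapse.mp4", 30, 23)
	if err != nil {
		panic(err)
	}
	// The slice would be handed to exec.Command("ffmpeg", args...).
	fmt.Println(args)
}
```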

Infrastructure as Code

The Azure infrastructure — resource groups and the static web app — is defined in Terraform using the azurerm provider, with state stored in an Azure Storage Account. This keeps the cloud footprint reproducible and auditable, even though it currently covers a relatively small surface area.

The stack is intentionally practical rather than fashionable: Go for services, Jekyll for content, Docker Compose for orchestration, and self-hosted tooling wherever it makes sense. As the platform evolves, the aim is to keep the dependency surface small and the individual components easy to reason about in isolation.