Platform / Update

The Hardware Behind Herb Hub 365

Every data point, timelapse frame, and narrated video that appears on this site starts with a piece of physical hardware sitting in or near the greenhouse. This post is a tour of the machines, boards, and sensors that make up the Herb Hub 365 infrastructure — what each one does, why it was chosen, and how they connect.

The Fleet at a Glance

Image Capture

Raspberry Pi 3B · Cortex-A53 · 1 GB RAM

Pi AI Camera · WiFi · Debian 13

Hardware Control

Raspberry Pi 3B · Cortex-A53 · 1 GB RAM

Relays · Sensors · Docker · GPIO · WiFi

Orchestration & Compute

Raspberry Pi 400 · Cortex-A72 · 4 GB RAM

Docker · NFS · 118 GB SD · Ethernet

AI Compute

Xeon E5-2650 · 32 threads · 31 GB RAM

RTX 3060 12 GB · Ubuntu 24.04

Shared Storage

Synology · 172.16.99.17

1.5 TB video storage · NFS exports

hh-01 — The Eyes of the Greenhouse

The first node is a Raspberry Pi 3 Model B tucked inside the greenhouse, running Debian 13 (Trixie) on a 15 GB microSD card. Its sole purpose is image capture. Connected via WiFi on the 172.16.108.x network, it runs without Docker — a deliberate choice to keep its footprint minimal and its uptime reliable.

The camera is a Raspberry Pi AI Camera, a Sony IMX500-based module with an on-board neural processing unit. For the timelapse use case, the AI acceleration goes largely unused — what matters is consistent, high-quality image output on a configurable schedule. Captured frames are written to a local directory that is exported over NFS and mounted by hh-03, meaning the timelapse builder service can access fresh images without any data transfer scripts.

At the time of capture the machine was running cool at 47°C SoC temperature and had been up for over eight days without a restart — a fair reflection of how little it needs to do.

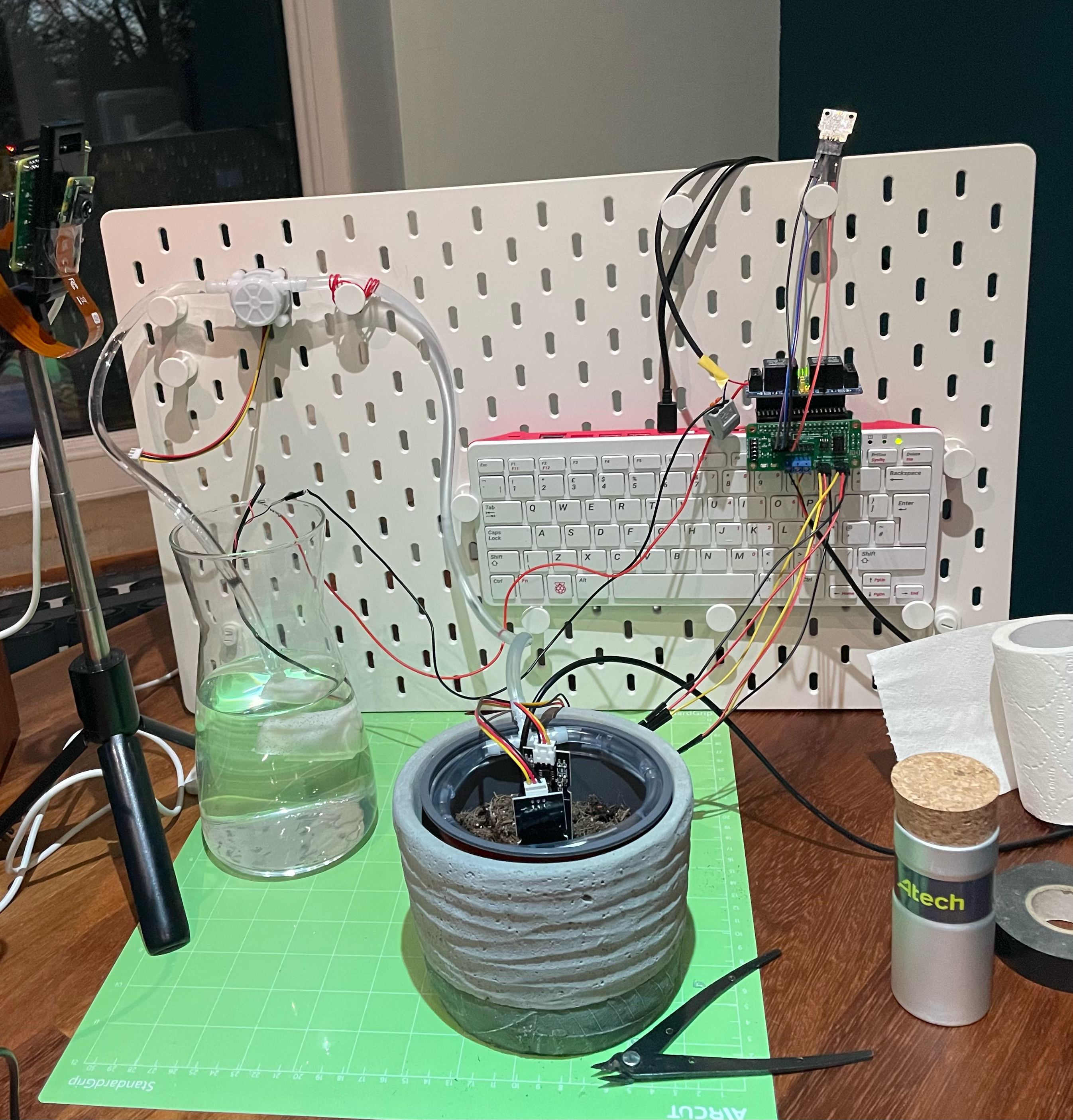

hh-02 — The Hands

The second Pi 3 Model B carries a very different workload. Where hh-01 only observes, hh-02 acts. It is wired into the physical environment via its GPIO pins and runs the Go services responsible for automated watering and sensor reading.

The attached hardware includes:

- 4-channel relay module — switches 12V circuits to open and close the solenoid valves that control water flow to each plant bed. The relay channels map directly to the GPIO pins driven by the watering Go service.

- Capacitive soil moisture sensors — one per plant zone, returning analogue readings that are digitised and published to the Prometheus metrics endpoint. Capacitive sensors were chosen over resistive ones for longevity in a damp soil environment.

- DS18B20 waterproof temperature probes — stainless steel, one-wire protocol, reading soil temperature directly at root level rather than ambient air. The 1-Wire protocol means multiple sensors can share a single GPIO pin with unique device addresses.

- BMP280 breakout (Pimoroni) — mounted at canopy level, reading ambient air temperature, barometric pressure, and altitude. This provides the greenhouse climate data that appears in the daily sensor snapshot posts.

Docker 29.2.1 is installed on hh-02, which runs a small number of service containers. The machine connects wirelessly on the same 172.16.108.x subnet as hh-01, keeping both sensing nodes on the same WiFi segment.

hh-03 — The Brain

The orchestration node is a Raspberry Pi 400 — the keyboard-integrated form factor that packs a Cortex-A72 quad-core processor and 4 GB of RAM into a compact desktop unit. Compared to the 3B nodes it is noticeably more capable: faster CPU, four times the memory, and a 118 GB microSD card with room to grow.

hh-03 is the only Pi connected via wired Ethernet (172.16.106.153), which gives it a stable, low-latency path to the NAS and the wider LAN. This matters because it is the machine that runs the main Docker Compose stack — the RabbitMQ broker, Traefik proxy, blog-poster, herbhub-manager, tts-narrator, video-publisher, and timelapse-builder services all live here.

It mounts two NFS shares from the NAS at 172.16.99.17: one for video output and one for production resources like avatar files and intro clips. It also mounts hh-01’s timelapse directory over NFS, giving the timelapse-builder service direct read access to camera frames as if they were local. The result is a clean separation: hh-01 writes images, hh-03 reads and processes them, without either machine needing to coordinate the transfer explicitly.

At the time of capture hh-03 was running at a comfortable 37°C with two active Docker bridge networks visible in the interface list, indicating the core service stack was up.

lin-hp — The Muscle

Not strictly a Herb Hub node, but providing the compute that makes the AI-driven features possible. lin-hp is an x86 tower running Ubuntu 24.04, built around an Intel Xeon E5-2650 — an eight-core, sixteen-thread server CPU running at 2.0 GHz with 31 GB of ECC RAM. It is the machine that would have been decommissioned from a server rack somewhere before being repurposed for the home lab.

The critical component is the NVIDIA GeForce RTX 3060 with 12 GB of VRAM. This is what enables real-time avatar video generation via MuseTalk and fast LLM inference via Ollama (currently running Gemma 4). Without the GPU, video narration would be feasible but slow; with it, a two-minute narration video renders in roughly the time it takes to watch it.

lin-hp mounts the same NAS NFS shares as hh-03 so that completed videos land directly on shared storage, accessible to the publisher service running on hh-03 without any additional file transfer step.

Shared Storage — The NAS

Sitting at 172.16.99.17 is a Synology NAS providing the shared storage layer that ties the cluster together. Two NFS exports are mounted across both hh-03 and lin-hp: one for video output and one for production resources. With 1.5 TB of video data already accumulated and roughly 1.2 TB free, there is comfortable headroom for continued daily recordings.

The NAS is intentionally kept out of the compute path. It stores and serves files; all processing happens on the Pi and x86 nodes. This keeps the NAS simple, reliable, and easy to back up independently.

How It Fits Together

captures frames

exports via NFS

runs services

builds timelapse

reads sensors

controls watering

AI inference

video generation

The two Pi 3B nodes handle the physical world at the edge. hh-01 watches and records; hh-02 listens to the soil and responds with water when needed. Both run quietly on WiFi, drawing minimal power, with no display attached.

hh-03 is the coordinator. It receives data and images from the edge nodes, runs the service stack, and publishes finished content. It is the only node with a wired Ethernet connection — a deliberate choice for a machine that handles all Docker networking and NFS traffic.

lin-hp sits slightly apart from the cluster, providing AI compute on demand rather than running continuously in the loop. When a video needs generating or a language model needs querying, requests flow across the LAN to lin-hp and results come back as files on shared NFS storage.

The whole thing draws somewhere in the region of 20–30 watts under normal load — less than a single incandescent bulb — which feels appropriate for a greenhouse that is trying to grow things rather than heat a data centre.

</thinking>

</thinking>